Copywriters are an essential part of any marketing team. However good your product is, no one will know about it without promotion. And promotion means copy, whether for Facebook ads or for blog posts. So the need for copywriters lingers. Hell, if it didn’t, I would be out of a job 😅

However, hiring a professional copywriter costs at least $50k-$60k annually. This made us think: is there a cheaper way to produce copy? Enter artificial intelligence (AI) copywriting software. The market is replete with options which can “create human-like copy instantly”, or so the app developers assure us. Is that really so? We decided to find out. First things first though.

Why we picked this topic

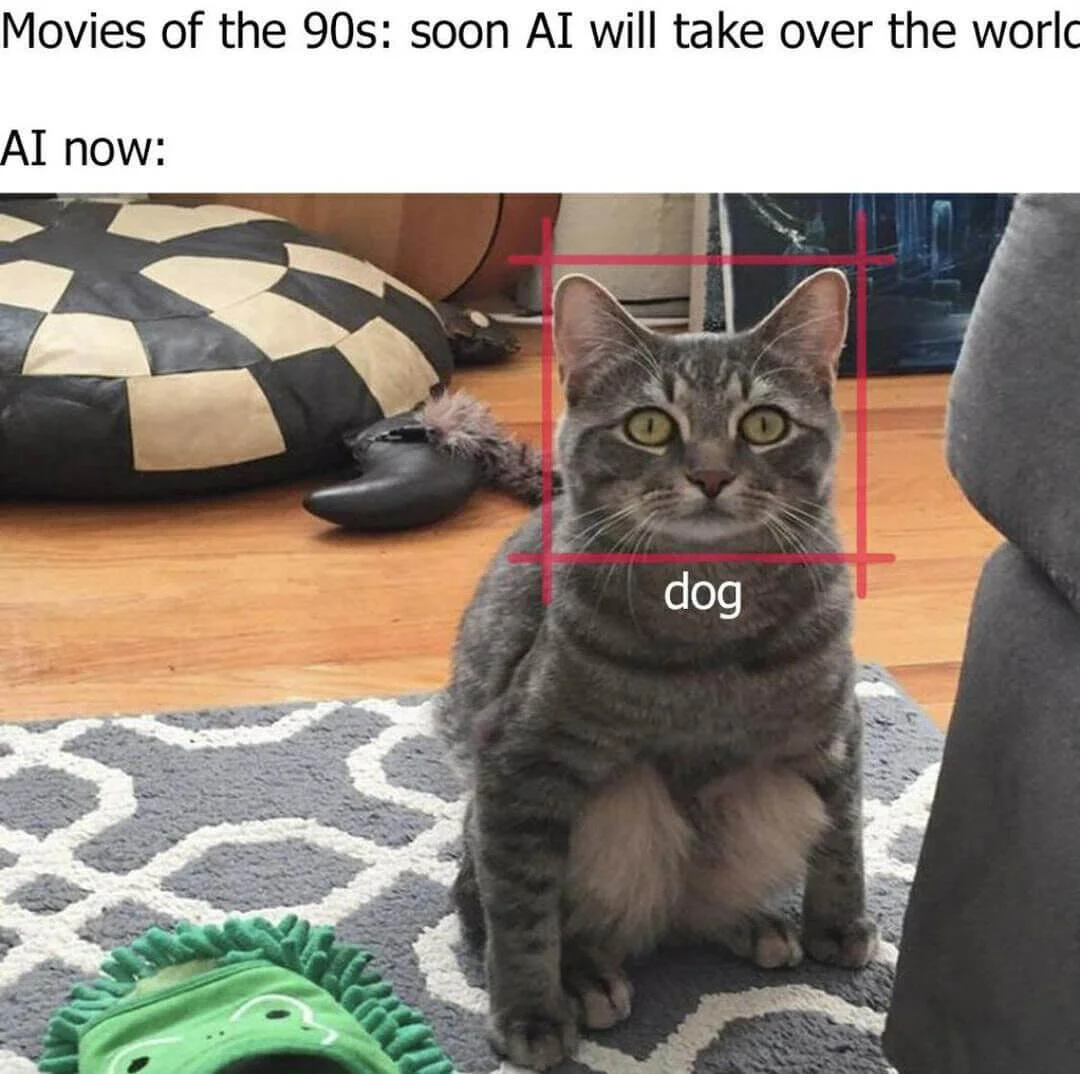

Big strides are being made in terms of automation around the world. Robots are taking over manufacturing, while AI might have developed to a point where it thinks of itself as a person.

Are more creative professions next in line? AI in design is already a thing, although it’s generally accepted that it won’t be able to replace human creators. Is the picture different for copywriters? Can AI generate ready-made texts? We took a deep dive into the subject to find out.

What is AI copywriting software exactly? How does it work?

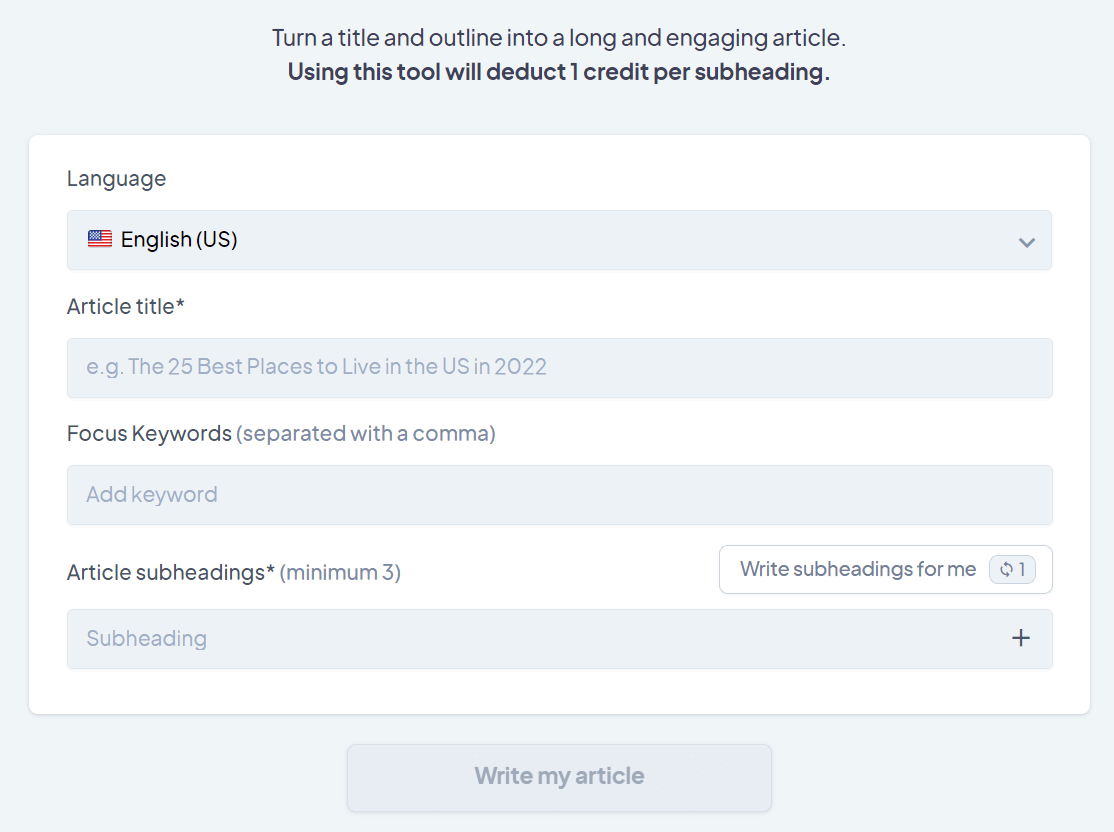

AI copywriting software comes in the form of apps which allow users to input parameters (things like topic, structure and keywords), and then use an algorithm to produce human-like texts in various formats (ads, social media posts, emails, articles, etc.). The input process differs slightly depending on the app you choose, but the end goal of producing human-like copy remains the same.

The technology behind these apps differs somewhat. The most popular algorithm currently at their core is the Generative Pre-Trained Transformer 3 (GPT-3): a language model which is trained on text from the internet and combines machine learning and natural language processing (NLP) to produce text.

Machine learning is how AI ‘learns’: it processes a large amount of data and draws its own conclusions. Natural language processing combines machine learning and linguistics so that AI can understand, and subsequently reproduce, human language.
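
To make the idea of input parameters more concrete, here is a purely illustrative Python sketch of how an app might turn a topic, an outline and optional keywords into a single text prompt for a language model. We don’t know how any of the tools we tested actually do this: the `build_prompt` function and the prompt wording are our own assumptions, and no real API is called.

```python
def build_prompt(topic, outline, keywords=None):
    """Assemble a plain-text prompt from user inputs.

    Hypothetical format: real apps may structure their prompts very differently.
    """
    lines = [f"Write a blog post about: {topic}", "", "Outline:"]
    # Number the subheadings so the model can follow the requested structure.
    lines += [f"{i}. {heading}" for i, heading in enumerate(outline, start=1)]
    # Keywords are optional, as they are in most of the apps we tried.
    if keywords:
        lines += ["", "Keywords to include: " + ", ".join(keywords)]
    return "\n".join(lines)


if __name__ == "__main__":
    # Example inputs are illustrative only.
    print(build_prompt(
        topic="dynamic email content",
        outline=["Definition", "Benefits", "Types", "Common mistakes"],
        keywords=["personalization"],
    ))
```

The resulting string is what would be handed to the language model, which then continues the text from there.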

How did we test the AI tools?

Our main criterion was simple: the ability to produce meaningful long-form content, i.e. articles or blog posts. It’s what we do here on the Selzy blog after all: put together detailed articles on different aspects of email marketing.

On a more abstract level, long-form content is also the hardest to create: it takes a lot of time to research and write. So if AI apps can’t tackle the biggest problem, why bother with them?

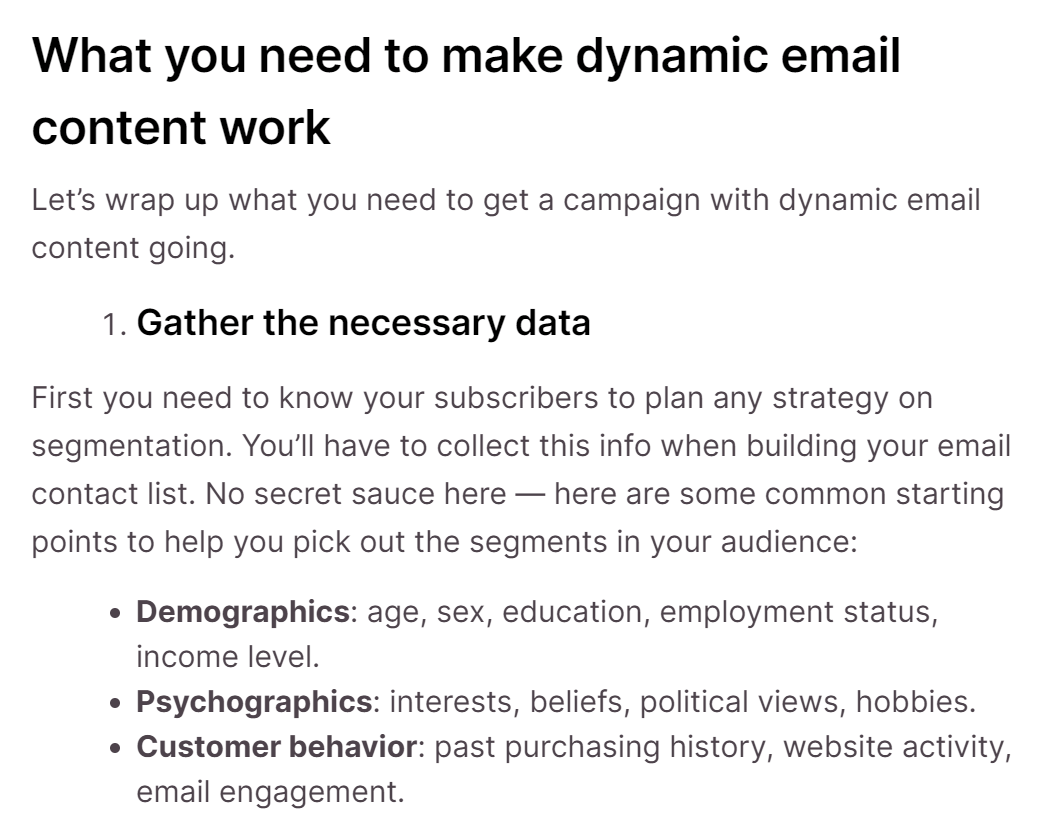

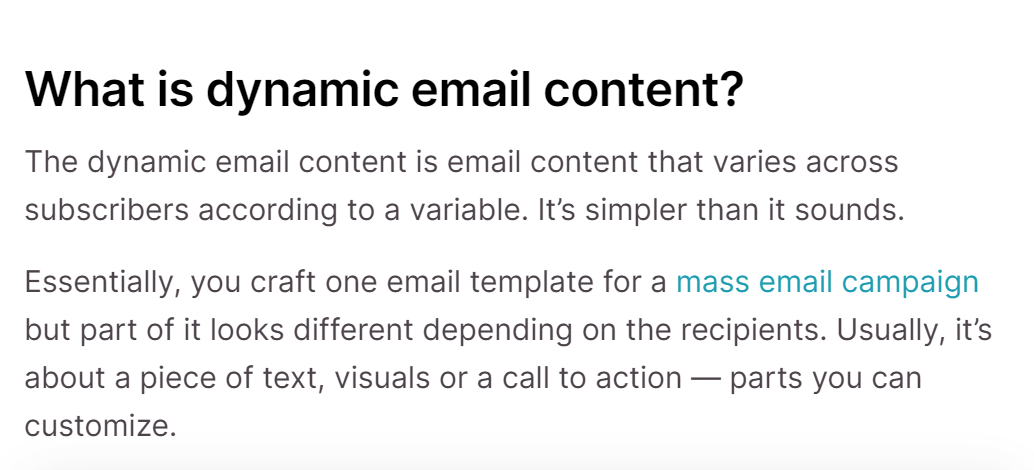

After deciding to test long-form content only, we picked a topic that is representative of what we write at Selzy and that was already covered on our blog, for comparison purposes. The topic we chose, dynamic email content, is not overloaded with technical terms and details, which made AI’s job a little easier. Another nod to AI is that the internet is rife with info on the subject, so there is plenty of material to go on.

Finally, we agreed that a good article should be:

- Topical: after you input data, the tool comes up with a text relevant to the topic. That is the most important parameter, of course.

- Coherent, i.e. parts should be logically interlinked and tied into the overall structure.

- Factually correct: definitions, numbers, examples, etc. need to be on point.

- Error-free: with grammar and punctuation spot-on.

We then gave each app marks on each parameter, from 1 to 5.

We also considered ease of use: how much effort it takes to input the data needed to generate an article.

Lastly, we tested each article for plagiarism using Copywritely and used the result as a tie-breaker.

What tools we chose and what we managed to find out

We cross-referenced the most popular AI copywriting tools on Capterra and TrustPilot — and then added a sprinkle of our own judgment. For example, Copy AI is a popular solution which was absent from Capterra — but we tested it anyway. Incidentally, Copy AI claims that the Ogilvy & Mather advertising agency uses them. Wonder what David Ogilvy himself would have to say about that 🤔

Here’s what we narrowed our selection down to: Anyword, Copy AI, Scalenut, Copymatic and Frase.

Disclaimer: we relied on free trials of every app bar Frase. We don’t think it influenced the results much, because, with few exceptions, all tools are available during trial periods. However, we can’t know what’s behind the paywall for sure, so we feel obligated to mention it.

Let’s get into it, shall we?

Anyword

Anyword has a free version with a single limit: 1,000 words per month. The Basic plan ups the ceiling to 20,000 words per month (for $24) and throws in 30 extra languages. The Data-Driven plan will offer you 30,000 words per month (for $83) and the ability to rewrite existing texts. The reason we’re talking about plans in detail is to illustrate that content quality is unlikely to get better even if you pay more. You will get some fancy tools but nothing that can majorly affect the quality of writing.

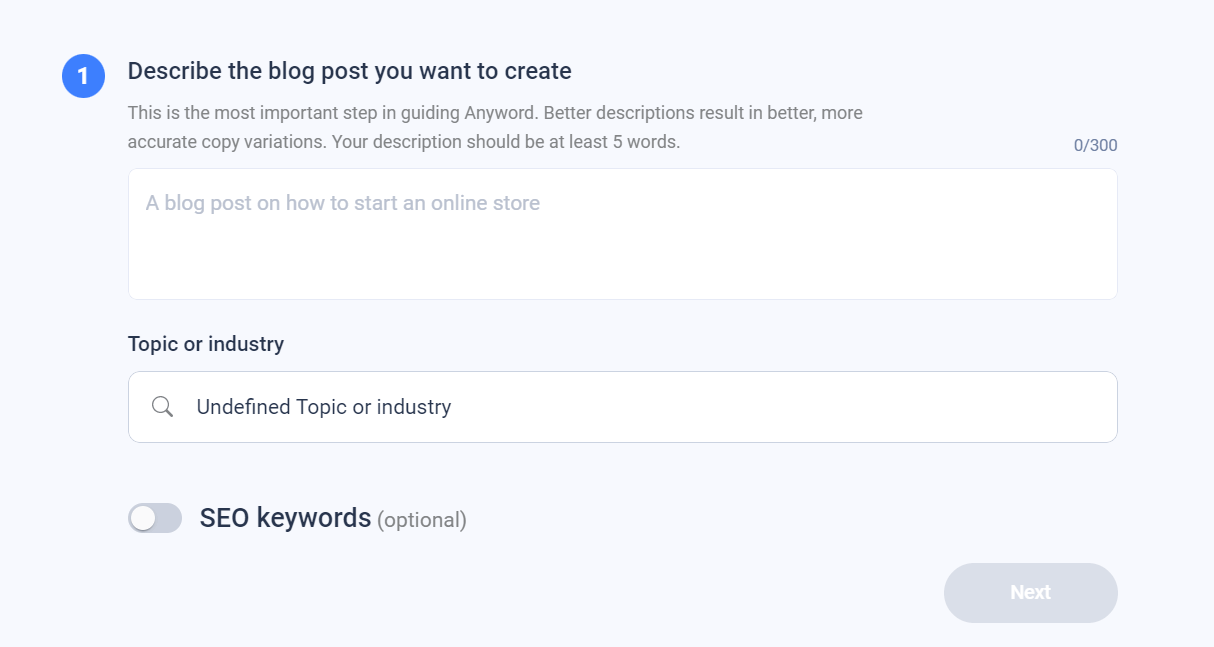

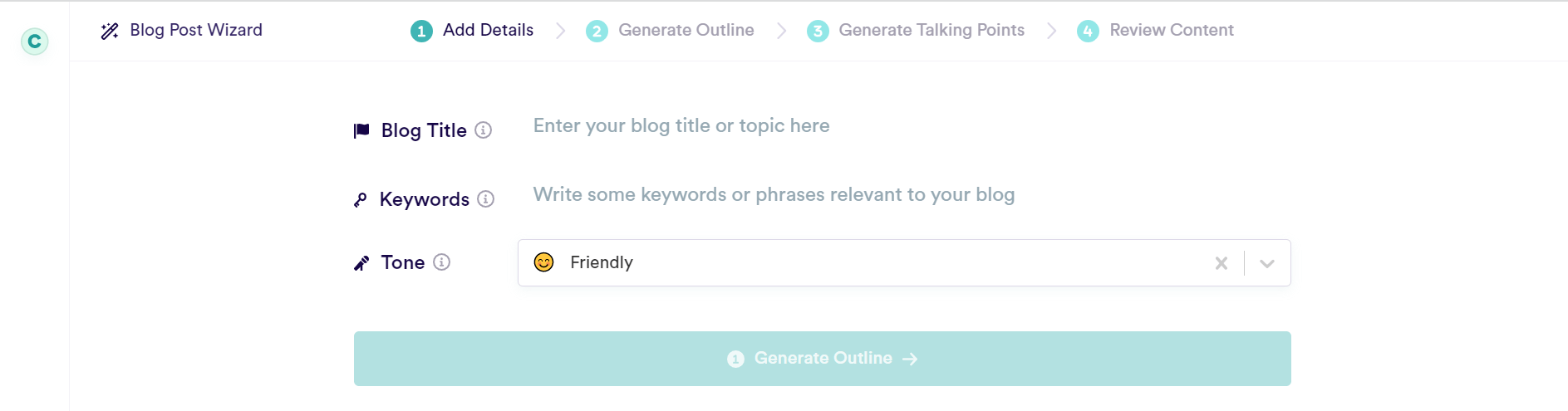

Anyword has only one tool for long-form content, called the Blog Wizard. The process of creating a post is fairly straightforward: you describe the topic, choose the field/industry and throw in some keywords (optional).

The app then generates three titles for you to choose from, a post outline and three intro paragraphs. It all looked easy enough and professional, and we couldn’t wait to see the result.

We were utterly disappointed. What we got at first was an outline of a post (title and paragraph headings), but no text. Anyword offered us two choices: 1) start writing ourselves and it’d pick up or 2) generate paragraphs of text automatically. We didn’t want to make its job easier, so we picked the automatic option.

The tool produced a text of around 600 words which was half-relevant to the topic at best.

Topicality (2/5): Anyword scored two points (and not fewer) for a couple of reasons:

- The topic definition, despite being narrow, was at least partially right.

- The benefits of using dynamic content and common mistakes were also mentioned correctly, although the list was brief.

However, the tool was wide of the mark a lot too:

- Instead of describing what data you need to make dynamic content in emails work, Anyword blabbed about RSS feeds — for absolutely no good reason.

- Anyword failed to retrieve examples of successful email campaigns with dynamic content and instead talked about personalization.

Coherence (2/5): needless to say, going off-topic on so many occasions affected coherence too. The first three paragraphs (intro, definition, benefits) were logically linked, but the rest were not. Anyword remembered what it was supposed to write about in the last paragraph, but it was too little too late.

Factuality (2/5): here is a list of instances where Anyword was either wrong or lacking. This long list cost the tool three points:

- The definition of dynamic email content was very narrow: text was the only thing Anyword considered a variable in a dynamic email.

- The benefits of using dynamic emails were watered down (Anyword only briefly mentioned personalization).

- The tool never got around to describing which types of dynamic content there are, instead churning out in essence another intro paragraph.

- The passage about common mistakes when using dynamic email content was at least partially right, but by that time Anyword had a mountain to climb to come off as a credible long-form AI tool.

Error-free (4.5/5): there were a couple of grammatical errors, but nothing too serious in terms of either grammar or punctuation.

Conclusion: the text was mostly nonsensical. Some paragraphs were related to the topic, but most were not. It would need a lot of input from a human writer and an editor to process the article into readable content.

Overall mark: 10.5/20

Copy AI

Here is what we thought about it.

Topicality (4/5): there was a hiccup in the second-to-last paragraph, which was only slightly relevant, and one strange bit about A/B testing.

Coherence (3.5/5): the text was logically linked and looked consistent. But the irrelevant paragraph and the A/B testing bit mentioned above affected coherence as much as topicality. Copy AI also failed to deliver on the promise it made in the intro paragraph to include examples of successful campaigns.

Factuality (3/5): arguably the weakest part of the tool. The definition of dynamic emails originally came off as very narrow, before the tool corrected itself by talking about different content types. Copy AI also went on about A/B testing for some reason, which is a factual mistake as much as a topical one: dynamic content still resides inside one campaign, not two. You can’t run A/B tests within the same campaign based around dynamic content.

Error-free (4.5/5): strictly speaking, there were some mistakes, but they were really minor. Deducting a full point for them seemed cruel, so we went only half a point down.

Conclusion: the resulting text will still take some time and effort from a human editor to correct, but not much. We were disappointed to see some slight hiccups which ruined the overall very good impression. However, as a bonus, Copy AI pleasantly surprised us by returning a 100% unique text.

Overall mark: 15/20

Scalenut

Anyword was ranked highly on TrustPilot, while Scalenut was one of the best on Capterra. In both instances we have no idea why. Let me put it this way: maybe they are better suited for producing short-form content. But both failed pretty miserably at creating a blog post. Scalenut actually managed to bomb so hard that we had to go back and revise our opinion of Anyword, if only by a couple of points.

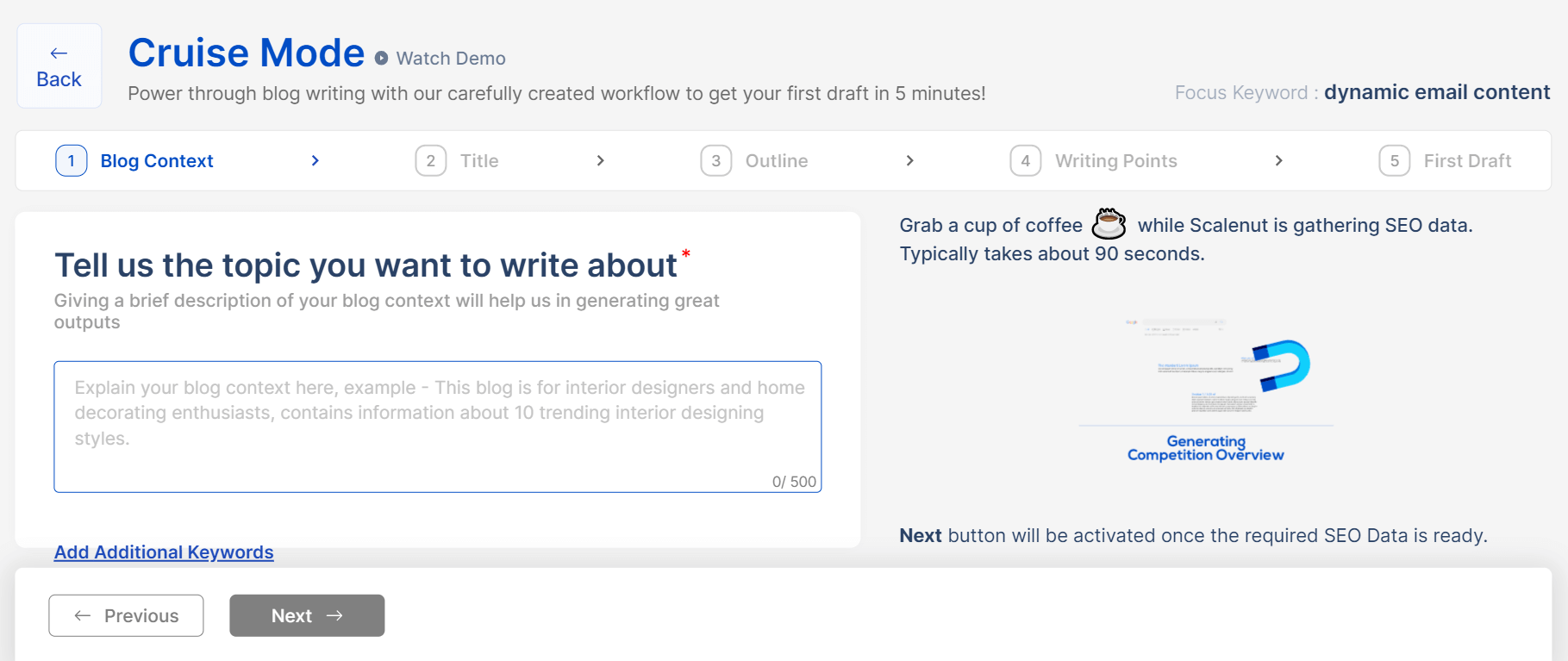

Scalenut was almost abusive too during the process of creating a blog post. It ranked our actions every step of the way (creating a description, choosing a title, intro, etc.), almost forced us to come up with keywords for SEO, only to then churn out a barely readable post of roughly 700 words.

Here’s what we thought about it:

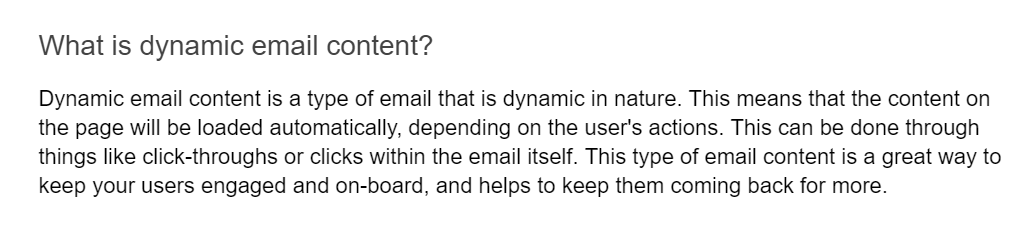

Topicality (1/5): Scalenut’s algorithm has heard of dynamic email content, but seemingly only as a set of separate, unrelated words. So it went ahead and threw these words at the wall to see what stuck. Little did. We couldn’t give it a lower mark.

Coherence (2/5): paragraphs were not linked, although the sentences inside them were. It was not a total bust, but very close to it.

Factuality (1/5): it got almost everything wrong. The definition, benefits, types and even the common mistakes marketers make when using dynamic email content were all inaccurate.

Error-free (4.5/5): grammar was almost entirely spot-on, although there were a couple of iffy sentences — and one instance where three sentences in a row started the same way.

Conclusion: apparently Scalenut thought that filling the article with the words “dynamic” and “engaging” was enough to make the post sound professional. It was anything but. From the moment it got the definition of dynamic email content wrong, we knew the game was up.

The frustrating thing is that the analytics behind Scalenut look impressive. The tool understands the optimal length for a post, pulls up examples of articles on the subject from the internet and analyzes their structure correctly, only to completely fail at combining that knowledge into readable text. Give Scalenut a wide berth if you need a long(ish) article.

Overall mark: 8.5/20

Copymatic

This is where we cheated a bit. Instead of going for the “Blog Post Writer” feature, which already looked like utter garbage at the data input stage (the title and subheadings Copymatic suggested were off-topic), we went with their “Article Generator”. It’s quite simple to use: you only need a topic description and an outline. Keywords are optional.

And while the content this time around was not without flaws, it was much better than Anyword’s and Scalenut’s attempts. It clocked in at over 1,000 words too, instead of the usual 600-700.

Here’s our verdict.

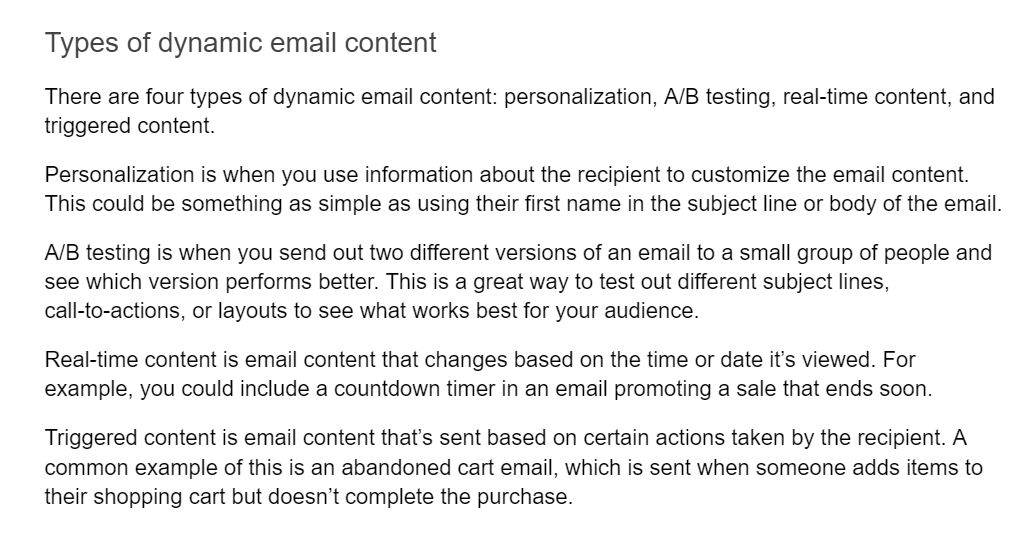

Topicality (4/5): Copymatic correctly recognised what we wanted to write about and produced a fully-fledged article on the subject. However, it went off-topic when describing types of dynamic email content, so we deducted a point.

Coherence (4/5): the main complaint is that Copymatic promised examples of dynamic email campaigns in the intro paragraph — and never delivered. Text under subheadings also looked a bit disjointed at times.

Factuality (3.5/5): the definition was once again pretty narrow. However, the biggest problem was the section on the types of dynamic email content, which was plain wrong.

Error-free (5/5): we didn’t find any mistakes of note.

Conclusion: the Article Generator feature looks really advanced when it comes to producing long-form content, even over the standard 600-700 words most competitors offer. It’s still not ready for publishing “as is”, however — there was at least one glaring flaw.

Overall mark: 16.5/20

Frase.io

Frase is the only tool we tested that doesn’t have the Generative Pre-Trained Transformer 3 (GPT-3) at its core. We were curious why that is and the answer seems simple: GPT-3’s more advanced versions are not as affordable as GPT-J, which is open source — and free — and which Frase is based upon.

Furthermore, it appears that GPT-J can continuously learn, while earlier versions of GPT-3 are basically stuck in 2020, when they were created. However, GPT-J looks like a half-measure: better than some versions of its competition (Ada and Babbage), but worse than the more advanced ones (Curie and Davinci).

How did it influence the results? In short, Frase outperformed the admittedly low-quality Anyword and Scalenut, but did worse than Copy AI and Copymatic.

Here’s what we thought of it:

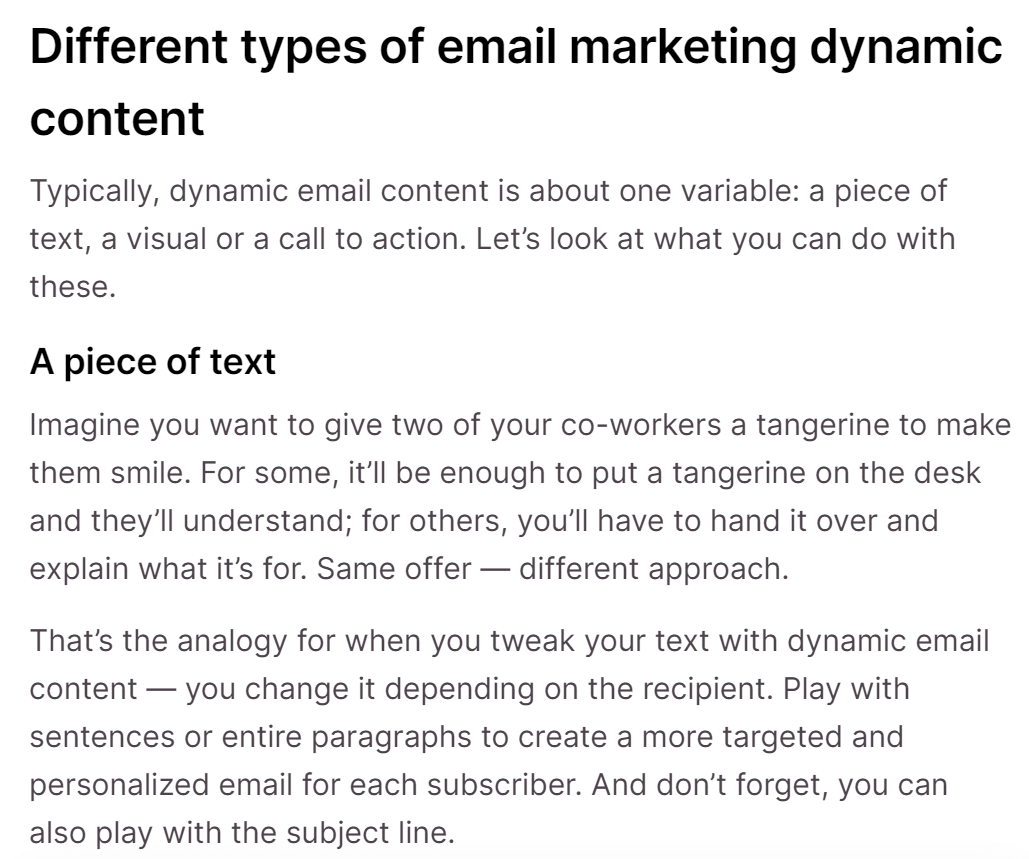

Topicality (3/5): It stayed mostly on topic, although the introduction had more to do with email marketing as a whole, and not specifically email content. And once again the AI bot just failed to grasp the types of dynamic content.

Coherence (3/5): The text resembled a bunch of separate paragraphs rather than an article with a common thread, which mirrors the way the data was input. It was as if the bot produced text for unrelated paragraphs, not an article. It was just lucky that the subheadings for these paragraphs were written by a human.

Factuality (4/5): We are a bit tired of AI bots getting the types of content wrong, but once again there was a factual mistake here. Frase seemed to think the article was more about types of emails and email marketing than about the dynamic content within them.

Error-free (5/5): No grammar mistakes were made. Punctuation was on point, one slight hiccup aside. But as we all know, grammar is the easiest thing to get right.

Conclusion: The tool needed more input data to produce an article (we had to write the description and subheadings in full ourselves) and still struggled in some places. We are a bit surprised it’s tied for 2nd with Copy AI, truth be told, so the tie-breaker kicks in: Frase’s originality score was much lower than Copy AI’s.

Overall mark: 15/20
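
For anyone who wants to double-check our arithmetic: each overall mark is simply the sum of the four criteria marks. Below is a minimal Python sketch that adds up the marks reported in the reviews above and flags ties. It is an illustration we put together for this recap, not a tool used during testing, and the names `MARKS`, `overall`, `ranking` and `tied` are made up.

```python
from collections import defaultdict

# Marks from the reviews above, in this order:
# (topicality, coherence, factuality, error-free), each out of 5.
MARKS = {
    "Anyword": (2, 2, 2, 4.5),
    "Copy AI": (4, 3.5, 3, 4.5),
    "Scalenut": (1, 2, 1, 4.5),
    "Copymatic": (4, 4, 3.5, 5),
    "Frase": (3, 3, 4, 5),
}


def overall(marks):
    """Overall mark out of 20: the plain sum of the four criteria."""
    return sum(marks)


def ranking(all_marks):
    """Tools sorted by overall mark, best first (ties keep input order)."""
    totals = {tool: overall(m) for tool, m in all_marks.items()}
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


def tied(all_marks):
    """Map each overall mark shared by two or more tools to those tools."""
    by_total = defaultdict(list)
    for tool, m in all_marks.items():
        by_total[overall(m)].append(tool)
    return {total: tools for total, tools in by_total.items() if len(tools) > 1}


if __name__ == "__main__":
    for tool, total in ranking(MARKS):
        print(f"{tool}: {total}/20")
    print("Ties:", tied(MARKS))
```

The sketch puts Copymatic first and flags Copy AI and Frase as tied on 15 points, which is where the plagiarism tie-breaker comes in. The tie-breaker itself is not coded here, because the only originality figure we reported is Copy AI’s 100%.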

Summing it all up

So, can AI copywriting tools replace human copywriters? The short answer is no.

The longer answer is: while some bots are closer to producing human-like content, even they will need editing work done. None of the content was ready to go as-is, and one major flaw they all shared was going off-topic in the same part of the article: the types of dynamic email content.

And this systemic flaw looks to be down to the algorithm most AI bots we tested have in common: the GPT-3 model. One of its drawbacks is misinterpretation of input data, and even though we refined our input on several occasions, the end result was the same.

If you have to pick one tool for long-form content, we’d advise relying on Copymatic: its Article Generator feature is rare among its competitors and mostly spot-on. Copy AI can stand you in good stead if you are after shorter blog posts. It’s more expensive, but its blog wizard is certainly better than Copymatic’s. Frase is neither awful nor particularly impressive, and we’d definitely stay away from Anyword and Scalenut.

Finally, it’d be remiss of us not to mention that we’ve only examined one specific function, generating long-form content, and even here there are things we didn’t test for: SEO, sticking to the strict tone of voice brands might require, taking a different angle depending on the aim of the text…

It’d be an even sterner test and we are not sure even the best tools on offer (Copy AI and Copymatic) would pass it. In short, we don’t think they are at a level to fully replace humans.